Pandas to spark

This is a short introduction to the pandas API on Spark, geared mainly toward new users. It shows some key differences between pandas and the pandas API on Spark. A simple starting point is creating a pandas-on-Spark Series by passing a list of values and letting the pandas API on Spark create a default integer index.
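A minimal sketch of that Series creation, side by side with plain pandas. It assumes PySpark 3.2 or later (where the `pyspark.pandas` module ships) and a working Java runtime. The helper names and sample values are illustrative, not from the original notebook, and the Spark import is deferred so the pandas half runs without Spark installed.

```python
import numpy as np
import pandas as pd

# Sample values (illustrative); np.nan marks a missing entry.
VALUES = [1, 3, 5, np.nan, 6, 8]


def make_pandas_series() -> pd.Series:
    """Plain pandas: data lives in local memory with a default integer index."""
    return pd.Series(VALUES)


def make_pandas_on_spark_series():
    """pandas API on Spark: same call shape, but the data is backed by Spark."""
    # Deferred import (assumption: PySpark >= 3.2 and Java are installed).
    import pyspark.pandas as ps

    return ps.Series(VALUES)
```

Calling `make_pandas_on_spark_series()` starts a local Spark session on first use, so expect a noticeable startup delay compared with the pandas version.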

Pandas and PySpark are two popular data processing tools in Python. Pandas is well suited to small and medium-sized datasets on a single machine, while PySpark is designed for distributed processing of large datasets across multiple machines. Converting a pandas DataFrame to a PySpark DataFrame becomes necessary when you need to scale your data processing to larger datasets. In the conversion call, data is the list of values from which the DataFrame is created, and schema is either the structure of the dataset or a list of column names. The call is made on spark, the SparkSession object in PySpark. A typical workflow creates a pandas DataFrame and then converts it to a PySpark DataFrame through that SparkSession.
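The workflow described above can be sketched as follows. This assumes the conversion goes through `SparkSession.createDataFrame`, which accepts a pandas DataFrame as `data` and an optional `schema`. The helper names, column names, and sample rows are hypothetical.

```python
import pandas as pd


def build_pandas_frame() -> pd.DataFrame:
    """Create a small pandas DataFrame (sample data is illustrative)."""
    return pd.DataFrame(
        {"name": ["Alice", "Bob", "Carol"], "age": [34, 45, 29]}
    )


def pandas_to_spark(spark, pdf: pd.DataFrame, schema=None):
    """Convert a pandas DataFrame to a PySpark DataFrame.

    spark  -- an active SparkSession
    pdf    -- the pandas DataFrame to convert
    schema -- optional: a StructType, a DDL string, or a list of column
              names; when None, Spark infers names and types from pdf
    """
    return spark.createDataFrame(pdf, schema=schema)
```

With a session in hand, `pandas_to_spark(spark, build_pandas_frame())` returns a Spark DataFrame whose `show()` and `printSchema()` methods display the rows and the inferred column types.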

As a data scientist or software engineer, you may often work with large datasets that require distributed computing. Apache Spark is a powerful distributed computing framework that handles big data processing tasks efficiently. We assume that you have a basic understanding of Python, Pandas, and Spark.

A Pandas DataFrame is a two-dimensional, table-like data structure used to store and manipulate data in Python. It is similar to a spreadsheet or a SQL table and consists of rows and columns. You can perform various operations on a Pandas DataFrame, such as filtering, grouping, and aggregation.

A Spark DataFrame is a distributed collection of data organized into named columns. It is similar to a Pandas DataFrame but is designed to handle big data processing tasks efficiently.

There are several reasons to convert a Pandas DataFrame to a Spark DataFrame:

- Scalability: Pandas is designed to work on a single machine and may not handle large datasets efficiently. Spark, on the other hand, can distribute the workload across multiple machines, making it well suited to big data processing tasks.
- Parallelism: Spark can perform operations on data in parallel, which can significantly improve the performance of data processing tasks.
- Integration: Spark integrates with other big data technologies, such as Hadoop and Kafka, making it a popular choice for big data processing tasks.

You can install PySpark using pip. A SparkSession is the entry point to using Spark; it provides a way to interact with Spark through its APIs.
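A minimal sketch of the installation and SparkSession steps. The application name, the `local[*]` master (all cores of the local machine), and the helper name `get_spark` are assumptions for illustration; on a real cluster the master is usually supplied by the environment.

```python
# Install PySpark first from a shell:
#   pip install pyspark


def get_spark(app_name: str = "pandas-to-spark"):
    """Return a SparkSession, creating one if none is active."""
    # Deferred import so this module loads even where PySpark is absent.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .appName(app_name)    # name shown in the Spark UI
        .master("local[*]")   # assumption: run locally on all cores
        .getOrCreate()        # reuse an existing session if there is one
    )
```

Calling `spark = get_spark()` once and passing `spark` to later steps keeps a single session for the whole program; `spark.stop()` releases its resources when you are done.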

For information on the version of PyArrow available in each Databricks Runtime version, see the Databricks Runtime release notes versions and compatibility.

To use pandas, you first have to import it using import pandas as pd. On large datasets, PySpark operations can run faster than pandas because Spark distributes the work and executes it in parallel across multiple cores and machines. In other words, pandas runs operations on a single node, whereas PySpark runs on multiple machines. For data that fits comfortably in memory on one machine, pandas is often the faster choice because it avoids Spark's scheduling overhead. If you want all columns to be of string type, you can request that when creating the Spark DataFrame. To speed up the conversion, enable Arrow, which is disabled by default, and install Apache Arrow (PyArrow) on all Spark cluster nodes, either with pip install pyspark[sql] or by downloading it directly from Apache Arrow for Python. The installed Apache Arrow version must be compatible with your Spark version; if Apache Arrow is not installed, the Arrow-enabled conversion reports an error.
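A hedged sketch of the two options mentioned above: enabling Arrow and forcing every column to a string. The source text truncates the exact settings, so the configuration keys below are an assumption based on the Spark 3.x names (Spark 2.x used `spark.sql.execution.arrow.enabled`), and casting with `astype(str)` before conversion is one way, not necessarily the author's, to get all-string columns. The helper names are hypothetical.

```python
import pandas as pd

# Assumed Spark 3.x configuration keys for Arrow-based conversion.
ARROW_CONF = {
    # Use Arrow when converting between pandas and Spark DataFrames.
    "spark.sql.execution.arrow.pyspark.enabled": "true",
    # Fall back to the non-Arrow path if the Arrow conversion fails.
    "spark.sql.execution.arrow.pyspark.fallback.enabled": "true",
}


def enable_arrow(spark) -> None:
    """Apply the Arrow settings to an active SparkSession."""
    for key, value in ARROW_CONF.items():
        spark.conf.set(key, value)


def to_spark_all_strings(spark, pdf: pd.DataFrame):
    """Convert pdf to a Spark DataFrame whose values are all strings."""
    # Cast on the pandas side so Spark infers a string type per column.
    return spark.createDataFrame(pdf.astype(str))
```

Arrow requires PyArrow on every node, so run `pip install pyspark[sql]` (or install `pyarrow` separately) before calling `enable_arrow(spark)`.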

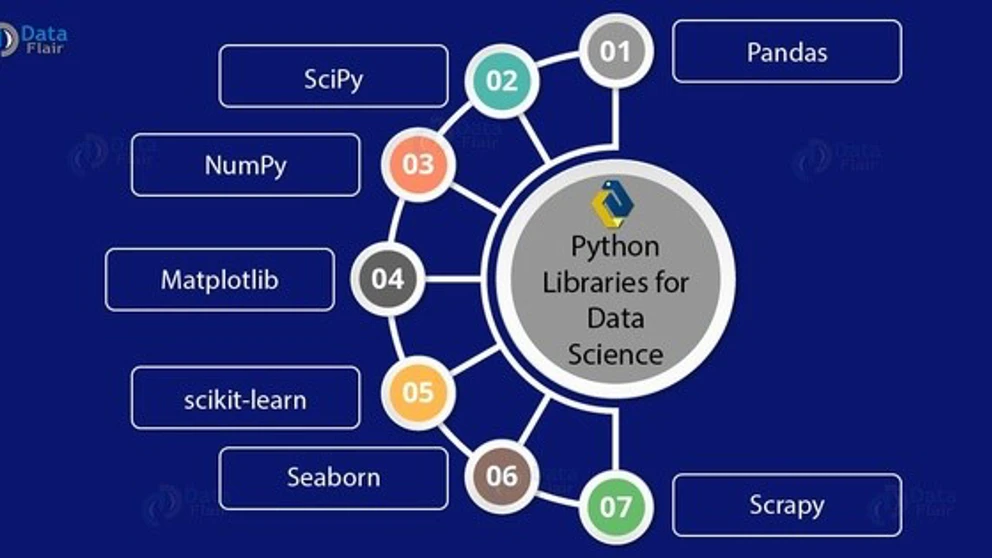

You can skip to the next section if you already know this. Python pandas is one of the most popular open-source libraries in the Python programming language; it runs on a single machine, and most of its operations are single-threaded. Pandas is widely used and is the de facto framework for data science, data analysis, and machine learning applications. For detailed examples, refer to the pandas Tutorial.

To run an Arrow-based conversion, first install the pyarrow library on your machine, for example with pip. When an error occurs during the Arrow-based conversion, Spark automatically falls back to the non-Arrow implementation; this behavior is controlled by a Spark configuration setting. Note that the data in a Spark DataFrame does not preserve the natural order by default.

For example, you can enable Arrow optimization to greatly speed up the internal pandas conversion. A typical example first creates a SparkSession object through the SparkSession builder and then uses that session to convert the pandas DataFrame. When the source frame has four numeric columns A, B, C, and D, inspecting the column types reports float64 for each of them. A second approach creates the DataFrame first and then converts it using the SparkSession.